Chatbots: 3 years on, what have we learnt?

Three years ago, the Digital Innovation team started exploring the world of chatbots. Since then, we have developed many Alexa skills and chatbots for various parts of EDF Energy's business; find out more in our previous blog.

Designing our first conversational experience, the EDF Energy Alexa skill, taught us a lot! We discovered that speed is key to a good user experience. While a pause of a few seconds might be (just about) acceptable on social messaging platforms, it isn't acceptable on a voice platform. Unlike on screen-led devices such as mobiles, users of Amazon Echo or Google Home tend to ask questions as they go about their routine. They aren't sitting watching for results; they expect answers in real time, in a way that mirrors typical human-to-human conversation patterns.

Ensuring a use-case is appropriate for a platform is also important. For example, we could design an Alexa skill that allows our customers to change their energy tariff, but without visual cues it would probably be more confusing than doing it on our website, and the amount of spoken information would be overwhelming!

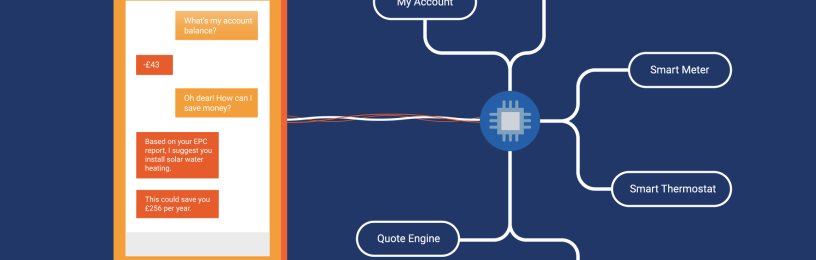

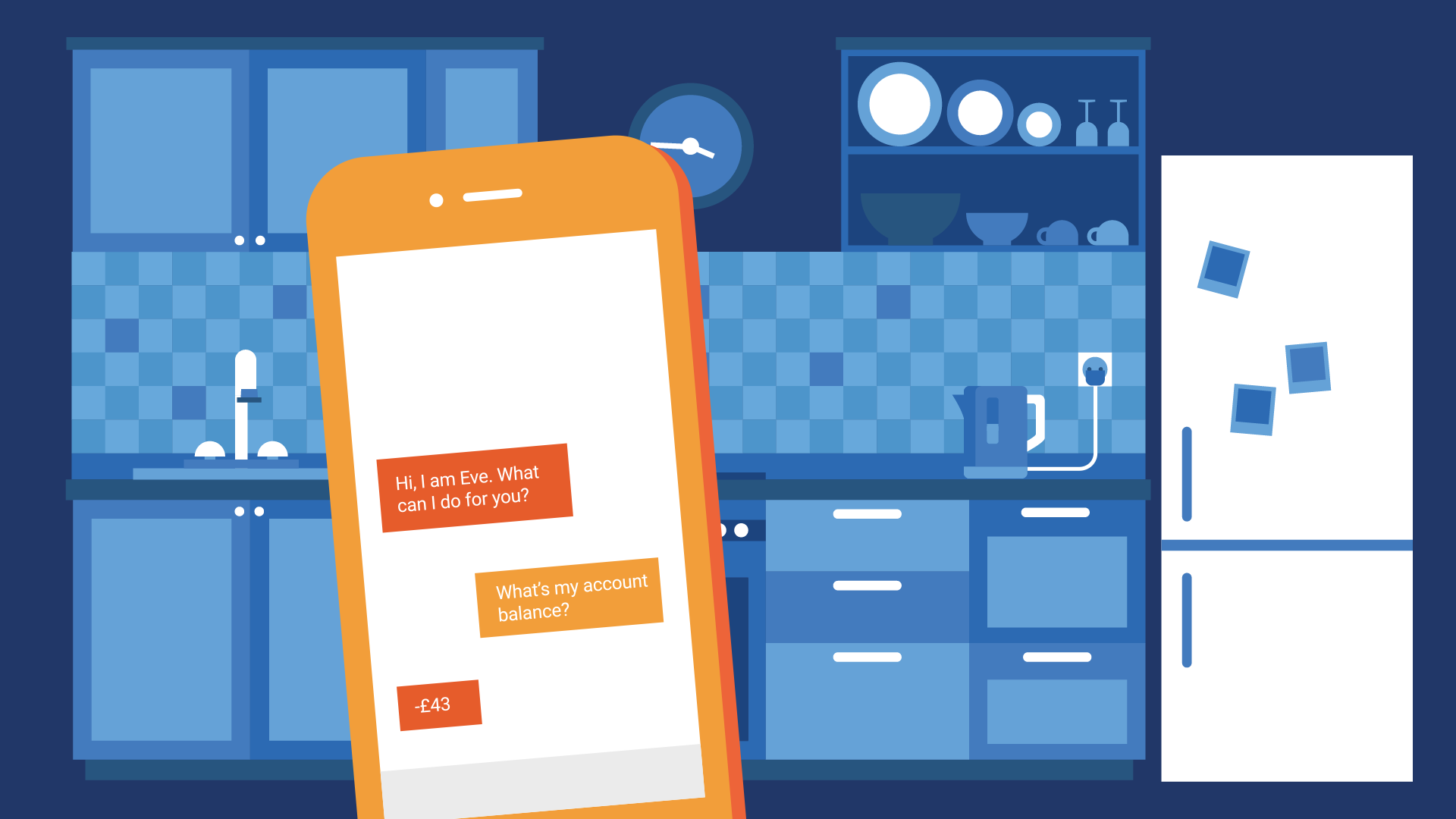

Although designing conversational experiences for voice and for text platforms is different, implementing scenarios in EVE, our Facebook Messenger bot, confirmed that choosing the right use-case is just as important for text-based chatbots. Messaging platforms may be more established, less restrictive, and more forgiving in terms of user experience, but that doesn't mean the experience won't suffer from a bad choice of use-case. Whatever the platform, bots should only be used where they can enhance the customer experience.

Facebook Messenger itself is a challenging platform. Its users have been accustomed to exchanging one-to-one and group messages for many years, and introducing new concepts, such as chatbots, into an existing platform can be confusing and frustrating. Context is everything when using messaging apps, and it is a challenge to teach a bot to understand sarcasm and humour and to replicate the syntax of everyday conversations. Realistically, we are a few years away from bots that can understand everything in every context, so being clear about what users can do when talking to a bot, and how they can do it, is the best way to make sure you don't end up with disappointed customers.

Another challenge to tackle when implementing bots is discoverability. Not everyone knows what a bot is, how to use one, or even that bots are available on platforms such as Facebook Messenger. Our latest version of EVE includes a tutorial that users can opt to go through before they start using the bot. The user interviews we conducted after introducing the tutorial showed its benefits: users were less likely to ask for something the bot couldn't do, and they did not feel lost while using it.

So, what does the future hold? Chatbots are still in the early stages of development. We are a few years away from seeing them match the capabilities of humans when it comes to completing a set of tasks in a complex environment. However, we need only look to China to see the enormous potential of chatbots and messaging platforms. WeChat has 900 million daily active users and processes trillions of dollars of purchases. In Europe, thanks to Amazon's push for its smart assistant, Alexa, voice-based bots seem more mature than their text-based counterparts, and customers can already find compelling experiences today.
