Vision language models for Retrieval-Augmented Generation

With funding from the TI programme under EDF R&D’s Project AIDA, the Digital Innovation team have benchmarked Vision Language Models (VLMs) for Retrieval-Augmented Generation (RAG) to extract data from technical presentations.

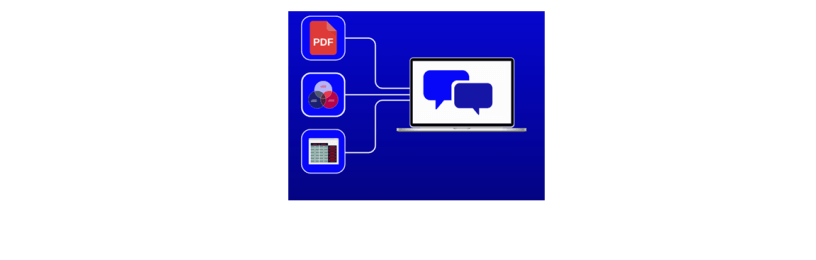

Large Language Models (LLMs) are becoming increasingly common in the workplace and are great tools for boosting productivity. However, most applications currently focus on text-only interaction, whereas the documents encountered in the workplace are often more complex and include technical diagrams, tables and illustrations. Unlike text-only language models, vision language models (VLMs) can process documents containing graphics such as images, tables and charts.

All you need is RAG

The study investigates the use of VLMs to process documents that contain graphics as well as text. We focus on multimodal Retrieval-Augmented Generation (RAG) to answer questions about technical presentations (slides), which works in two parts:

A retriever which returns the most relevant data from the database based on a query.

A generator which answers the query by extracting key information from the relevant documents passed on by the retriever.
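To make the two parts concrete, here is a minimal Python sketch of how a retriever and a generator fit together. It is an illustration only, not the pipeline benchmarked in the study: the `Slide` class, the function names and the word-overlap scoring are all assumptions made for this example. Each slide is represented by a short text description, where a real multimodal system would embed the slide image itself, and `generate` only assembles the prompt that would be handed to a VLM rather than calling one.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Slide:
    """Hypothetical record: one slide and a text stand-in for its content."""
    slide_id: str
    text: str


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric words."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, slides: list[Slide], top_k: int = 2) -> list[Slide]:
    """Retriever: return the top_k slides most similar to the query."""
    q = tokenize(query)
    ranked = sorted(slides, key=lambda s: cosine(q, tokenize(s.text)), reverse=True)
    return ranked[:top_k]


def generate(query: str, context: list[Slide]) -> str:
    """Generator stub: build the prompt a VLM would receive.

    A real generator would send this prompt (and the slide images)
    to a model and return the model's answer.
    """
    sources = "\n".join(f"[{s.slide_id}] {s.text}" for s in context)
    return f"Question: {query}\nContext:\n{sources}"


def answer(query: str, slides: list[Slide], top_k: int = 2) -> str:
    """Run the retriever, then pass its results to the generator."""
    return generate(query, retrieve(query, slides, top_k))
```

Keeping the retriever and generator as separate functions mirrors the two-part description above: either part can be swapped out, for example replacing the word-overlap scoring with image embeddings, without changing the other.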

Results of benchmarking models

We presented a benchmark of VLMs at a text mining seminar in October at La Défense, Paris. The seminar was a technical conference on natural language processing within EDF entities such as Eifer, EDF France and EDF China R&D, with content in both English and French. The models were evaluated on various metrics including accuracy, relevancy and readability. Our results indicate that these models can be reliably used for RAG within EDF, especially when extracting information from tables and flowcharts.

Next steps

Innovations like these are helping businesses become more efficient by reducing the manual work of inspecting complex slides, improving knowledge sharing and reducing ambiguity around complex subjects. These findings open the door to the development of new applications within EDF that support a richer variety of documents.
